Bit byte

1/24/2024

In computing, a kilobyte has traditionally meant 1024 bytes, not the 1000 bytes that the kilo prefix suggests. If this doesn't make sense, do the math yourself: 2^10 = 1024. This differs from most other contexts in computer technology, where terms prefixed by kilo, mega, giga and so on are used according to their meaning in the International System of Units (SI), which is to say, as powers of 1000. Under the SI convention, a 500 gigabyte hard drive holds 500,000,000,000 bytes. Said another way, SI units are based on factors of 10. Computers, on the other hand, operate on a binary number system, which is based on factors of two. So, binary measures of bits and bytes differ from decimal ones because they are based on a different numbering system.

Of course, the tables of multiples go far beyond yotta, but so far our technology doesn't extend much beyond peta. Terabit Ethernet is considered the next frontier of Ethernet speed, beyond the 100 gigabit Ethernet already in use. Beyond that, greater rates of data transfer are mostly theoretical.

Multiples of Bytes

As with bits, this chart can technically continue indefinitely, but most of those measures would be theoretical.

Bits and bytes are based on binary, while the decimal system is based on factors of 10 (base 10). Bits and bytes do not round nicely into the decimal numbering system.
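The gap between the two conventions can be checked with a few lines of arithmetic. The sketch below is only an illustration: the names `SI_STEP`, `BINARY_STEP` and `to_bytes` are made up for this example and do not come from any library.

```python
# Illustrative names only; nothing here is a standard API.
SI_STEP = 1000      # decimal (SI) prefixes: each step is a factor of 10^3
BINARY_STEP = 1024  # binary convention: each step is a factor of 2^10


def to_bytes(value, exponent, step):
    """Return how many bytes `value` units represent.

    exponent: 1 = kilo, 2 = mega, 3 = giga, 4 = tera, and so on.
    step: SI_STEP for powers of 1000, BINARY_STEP for powers of 1024.
    """
    return value * step ** exponent


# A "500 gigabyte" drive under each convention.
drive_si = to_bytes(500, 3, SI_STEP)
drive_binary = to_bytes(500, 3, BINARY_STEP)

print(2 ** 10)        # 1024
print(drive_si)       # 500000000000
print(drive_binary)   # 536870912000

# The same SI-sized drive expressed in units of 1024^3 bytes.
print(round(drive_si / BINARY_STEP ** 3, 2))  # 465.66
```

This is one way to see why a drive sold as 500 gigabytes (powers of 1000) can show up as roughly 465 when software counts in powers of 1024.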

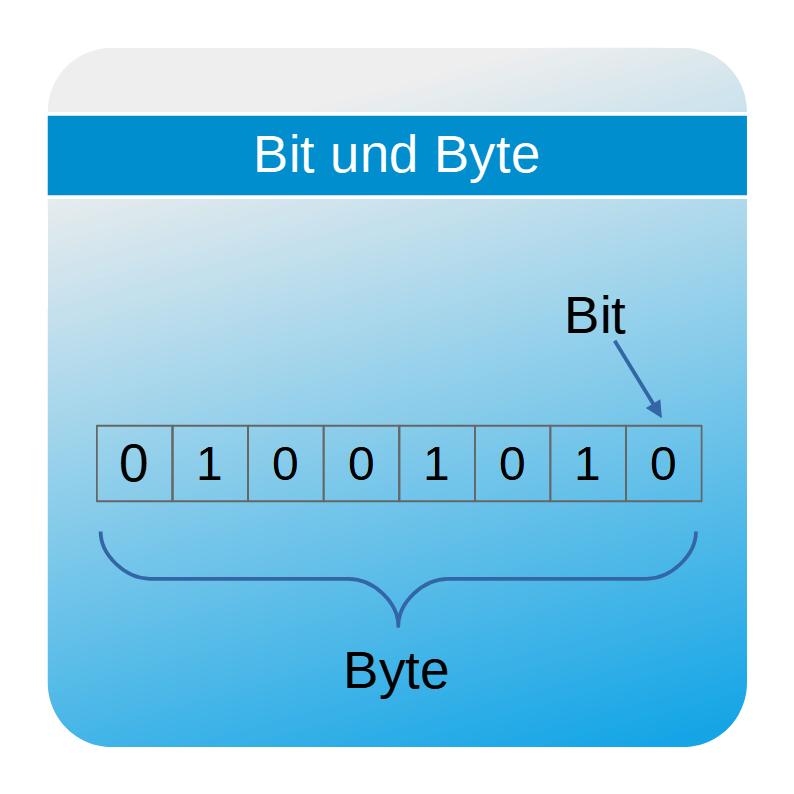

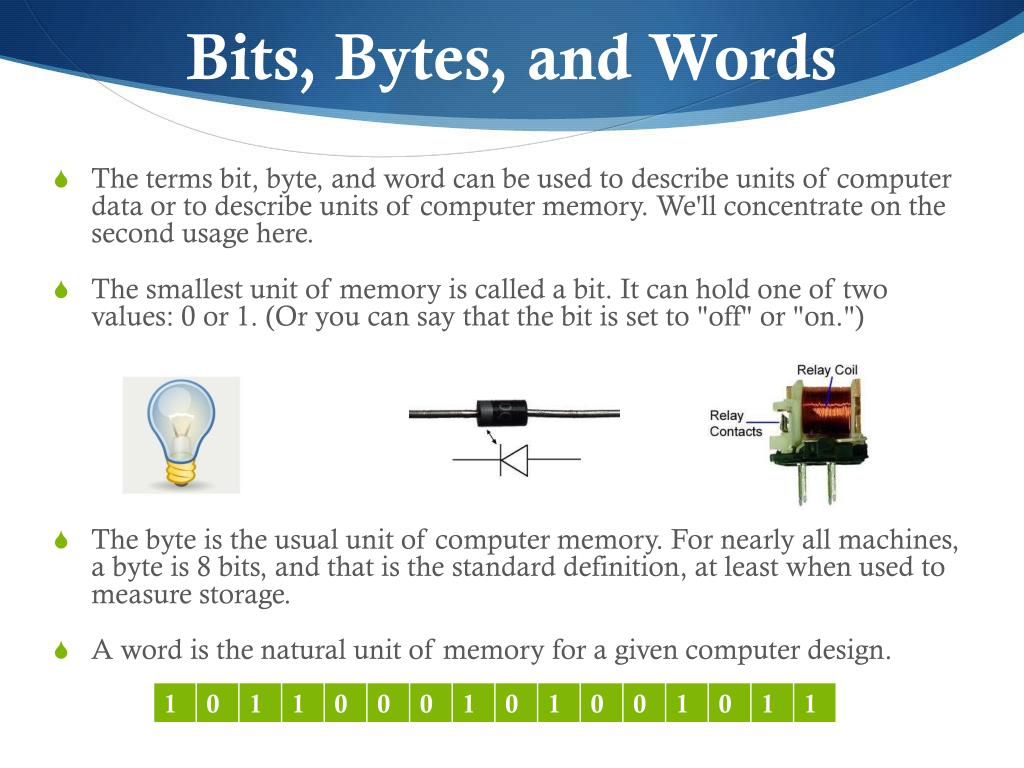

The terminology that goes with even the most basic concepts in computer technology can be a real deal breaker for many technological neophytes. One area that frequently trips people up is the set of terms used to measure computer data. Yes, we're talking about bits, bytes and all their many multiples. This is an important concept for anyone who works in depth with a computer, because these measurements are used to describe storage, computing power and data transfer rates. Here is a simple explanation of what these measurements mean.

Any basic explanation of computing or telecommunications must start with the bit, or binary digit. A bit is a single unit of digital information and represents either a zero or a one. It is the smallest amount of digital data that can be transmitted over a network connection, and the tiniest building block of each and every email and text message you receive. The use of bits to encode data dates way back to the old punch card systems that allowed the first mechanical computers to perform computations. Binary information that was once stored in the mechanical position of a computer's lever or gear is now represented by an electrical voltage or current pulse. Welcome to the digital age! (Learn more about the old days in The Pioneers of Computer Programming.)

If you're confused by the difference between bits and bytes, you're not alone. A byte is a collection of bits, most commonly eight bits. In fact, the term "byte" got its official spelling as a result of concerns that "bite" would accidentally (and incorrectly) be shortened to "bit." Unfortunately, the spelling change didn't clear up all the confusion. Bits are grouped into bytes to make computer hardware, networking equipment, disks and memory more efficient. Originally, bytes were created as eight bits because the common physical circuitry at the time had eight "pathways" in and out of processors and memory chips. At any one point in time, the gateway to one of these components could have an "off" or "on" condition at each of the eight pathways.

When it comes to computers, terms such as kilobyte (KB), megabyte (MB) and gigabyte (GB), and the abbreviations that go along with them, have slightly different meanings than their prefixes suggest.
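To make the relationship between bits and bytes concrete, here is a minimal sketch that prints the individual bits inside each byte of a short message. It assumes the common eight-bit byte; `BITS_PER_BYTE` and `to_bits` are names invented for this example.

```python
BITS_PER_BYTE = 8  # assumption: the common eight-bit byte


def to_bits(data: bytes) -> str:
    """Render each byte as eight 0/1 characters, separated by spaces."""
    return " ".join(format(byte, "08b") for byte in data)


message = "Hi".encode("ascii")  # two characters, one byte each

print(to_bits(message))              # 01001000 01101001
print(len(message) * BITS_PER_BYTE)  # 16 bits in total
print(2 ** BITS_PER_BYTE)            # 256 distinct values per byte
```

Each position in the printed pattern is one bit, either "off" (0) or "on" (1), and eight of them together give a byte 256 possible values.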
